Who this is for: Teams that need to decide between a self‑hosted workflow engine (n8n) and a managed SaaS automation platform (Zapier), especially when latency, uptime, or scaling are critical. We cover this in detail in the n8n vs Zapier Comparison Guide.

Quick Diagnosis

| Metric | n8n (self‑hosted) | Zapier (cloud) |
|---|---|---|
| Raw latency per node | 30‑120 ms | 150‑500 ms |
| SLA | Depends on your infra | 99.9 % (paid plans) |
| Task limits | No built‑in caps – limited by CPU, RAM & DB | 100 tasks/mo (Free) → 100 000+ tasks/mo (Enterprise) |
| Concurrent runs | Configurable via EXECUTIONS_MAX_CONCURRENT | Max 10 (Enterprise) |

EEFA: If a workflow stalls after a few hundred runs, check Zapier’s concurrency caps or n8n’s worker queue saturation. Scaling Docker/K8s replicas or upgrading the Zapier plan usually fixes it.

*In production, you’ll often see these limits bite when you start handling a few hundred events per minute.*

1. Core Performance Metrics

If you want to compare n8n and Zapier pricing, check the pricing breakdown before continuing with the setup.

| Metric | Zapier (cloud) | n8n (self‑hosted) |
|---|---|---|
| Avg. step latency* | 150‑500 ms | 30‑120 ms (node → node) |
| Throughput (steps / sec) | ~2‑5 per plan | 50‑200 per CPU core |
| Cold‑start time (first run) | 1‑2 s | <200 ms (warm container) |
| Memory per workflow | Managed by Zapier | 30‑80 MB (depends on node types) |

*Measured from API receipt to node completion, not counting external service response time.*

Why the gap?

Zapier isolates each step in a sandboxed worker, adding ~100 ms overhead. n8n runs nodes in the same process (or a lightweight pool), so extra latency is minimal.
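To see what the per‑step gap means for a whole workflow, here is a back‑of‑the‑envelope sketch. It multiplies the per‑step latency ranges from the table above by the number of steps; it assumes a linear workflow and ignores external API time, so treat the result as a rough floor rather than a prediction. The function name is hypothetical.

```javascript
// Per-step overhead ranges (ms) from the metrics table; external API time excluded.
const PER_STEP_MS = {
  zapier: { min: 150, max: 500 },
  n8n: { min: 30, max: 120 },
};

// Rough end-to-end estimate for a linear workflow: per-step range x step count.
function estimateWorkflowLatency(platform, steps) {
  const range = PER_STEP_MS[platform];
  if (!range) throw new Error(`unknown platform: ${platform}`);
  return { minMs: range.min * steps, maxMs: range.max * steps };
}

for (const platform of Object.keys(PER_STEP_MS)) {
  const { minMs, maxMs } = estimateWorkflowLatency(platform, 10);
  console.log(`${platform}: ${minMs}-${maxMs} ms for 10 steps`);
}
```

For a 10‑step workflow this gives 1500‑5000 ms on Zapier versus 300‑1200 ms on n8n, so the gap grows linearly with workflow length.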

On a shared VM, noisy‑neighbor CPU throttling can push n8n latency up to Zapier levels. Use dedicated cores or cgroup limits to protect performance.

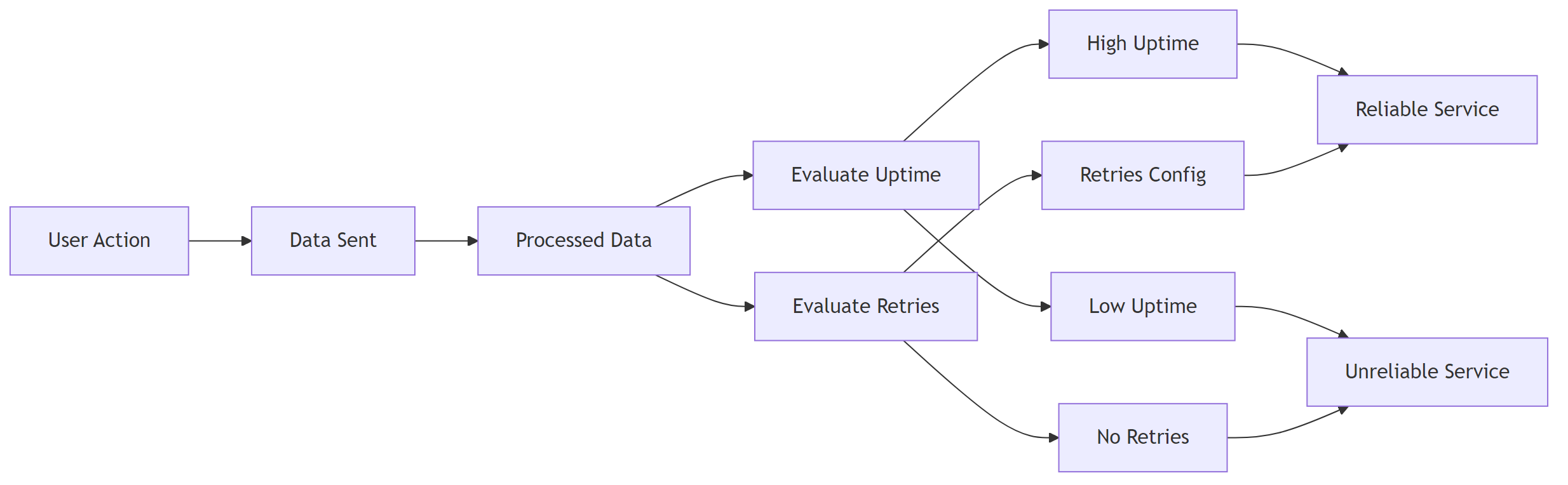

2. Reliability & Service‑Level Guarantees

For a feature‑by‑feature breakdown, see the n8n vs Zapier features comparison before continuing with the setup.

| Aspect | Zapier (Paid) | Zapier (Free) | n8n (self‑hosted) |
|---|---|---|---|
| SLA | 99.9 % uptime (Enterprise) | No SLA | Your infra SLA (e.g., AWS 99.99 % EC2) |
| Automatic retries | Up to 3 × exponential back‑off | Same | Configurable per node (node Settings → Retry On Fail) |
| Failure notifications | Email, Slack, SMS (Enterprise) | Email only | Custom webhook or built‑in “Error Trigger” |
| Data residency | Zapier data centers (US/EU) | Choose your region | Full control over DB/VM location |

Practical implication – If regulations require data to stay in‑region, n8n gives you that control. For mission‑critical pipelines, pair n8n with Kubernetes HPA and a multi‑AZ deployment to avoid a single point of failure.

Running n8n on a single VM creates a single point of failure. Back up the SQLite DB or switch to PostgreSQL with replication.
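The “up to 3 × exponential back‑off” retry behaviour from the table can be sketched as follows, for example when your own code calls a flaky external API. The 1 s base delay and the doubling factor are assumptions for illustration, not Zapier's documented schedule; inside n8n you would normally rely on its built‑in retry options instead.

```javascript
// Delay before retry i (0-based) is baseMs * 2^i: 1 s, 2 s, 4 s with the defaults.
function backoffDelays(retries, baseMs) {
  return Array.from({ length: retries }, (_, i) => baseMs * 2 ** i);
}

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Runs task(); on failure waits per the back-off schedule and tries again,
// giving 1 initial attempt + `retries` retries before rethrowing the last error.
async function withRetry(task, { retries = 3, baseMs = 1000 } = {}) {
  const delays = backoffDelays(retries, baseMs);
  for (let attempt = 0; ; attempt++) {
    try {
      return await task(attempt);
    } catch (err) {
      if (attempt >= retries) throw err; // out of retries: surface the last error
      await sleep(delays[attempt]);
    }
  }
}

console.log(backoffDelays(3, 1000)); // [ 1000, 2000, 4000 ]
```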

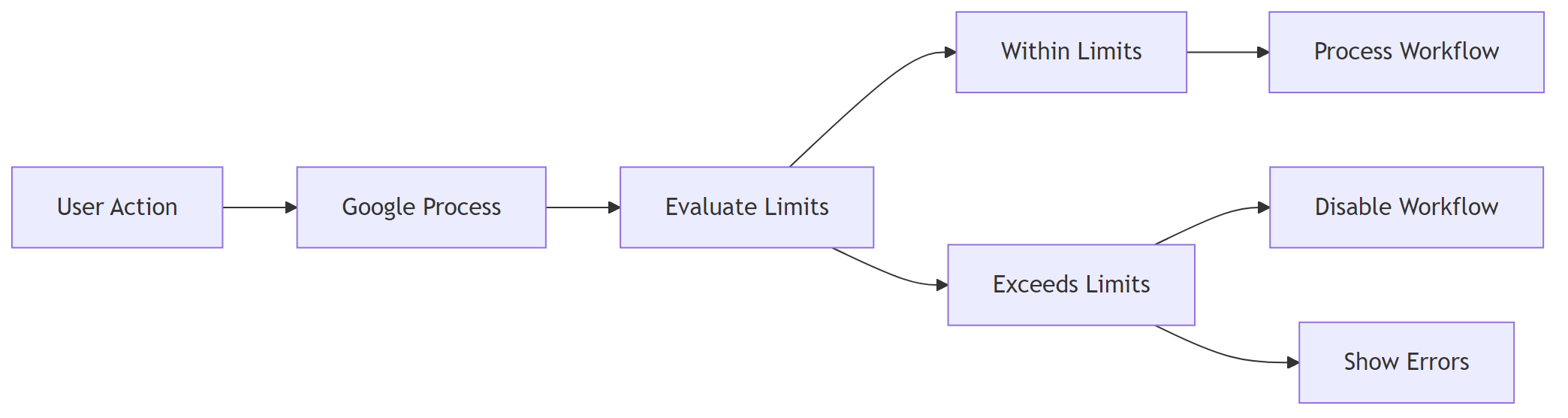

3. Workflow Execution Limits

| Limit | Zapier (Free) | Zapier (Professional) | Zapier (Enterprise) | n8n (Default) |
|---|---|---|---|---|
| Tasks per month | 100 | 2 000 | 100 000+ | Unlimited (bounded by infra) |
| Max steps per workflow | 100 | 100 | 100 | Unlimited (practical ≈ 500 nodes) |
| Concurrent runs | 1 (Free) | 5 | 10+ | Set via EXECUTIONS_MAX_CONCURRENT |
| Execution timeout | 15 min | 30 min | 60 min | EXECUTIONS_TIMEOUT (in seconds; default -1, i.e. disabled) |
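To see why Zapier's task caps bite at a few hundred events per minute, here is the arithmetic as a small sketch. It assumes each action step in a run counts as one task and a 30‑day month; the quotas are the per‑plan figures from the table above, with the Enterprise figure treated as a lower bound. The function names are hypothetical.

```javascript
// Assumption: every action step that runs counts as one task; 30-day month.
const MINUTES_PER_MONTH = 60 * 24 * 30;

// Monthly task quotas from the limits table (Enterprise is "100 000+").
const ZAPIER_TASK_QUOTA = { free: 100, professional: 2000, enterprise: 100000 };

function monthlyTasks(eventsPerMinute, actionStepsPerRun) {
  return eventsPerMinute * actionStepsPerRun * MINUTES_PER_MONTH;
}

// Plans whose monthly quota covers the given task volume.
function plansThatFit(tasks) {
  return Object.entries(ZAPIER_TASK_QUOTA)
    .filter(([, quota]) => tasks <= quota)
    .map(([plan]) => plan);
}

const tasks = monthlyTasks(300, 1); // a few hundred events per minute, one action each
console.log(tasks, plansThatFit(tasks)); // 12960000 []
```

At 300 events per minute with a single action that is roughly 13 million tasks a month, far beyond every listed quota, while the n8n column is bounded only by the host.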

Tip: For high‑frequency triggers (e.g., > 100 req/s webhooks), set `EXECUTIONS_MAX_CONCURRENT` to CPU cores × 2.
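The tip boils down to a one‑line calculation. This sketch reads the core count from Node's `os` module (preferring `os.availableParallelism()`, available since Node 18.14) and prints a value for the EXECUTIONS_MAX_CONCURRENT setting used throughout this guide. The ×2 multiplier is the rule of thumb from the tip, not a tuned value, and inside a container with a CPU limit Node may report the host's cores, so pass the limit explicitly there.

```javascript
const os = require('os');

// Prefer availableParallelism() (Node 18.14+); fall back to counting CPUs.
function detectCores() {
  return typeof os.availableParallelism === 'function'
    ? os.availableParallelism()
    : os.cpus().length;
}

// Rule of thumb from the tip: concurrency = CPU cores x 2 (never below 1).
function suggestedConcurrency(cores = detectCores()) {
  return Math.max(1, cores * 2);
}

// In a CPU-limited container, pass the limit instead, e.g. suggestedConcurrency(2).
console.log(`EXECUTIONS_MAX_CONCURRENT=${suggestedConcurrency()}`);
```

On the c5.large used for the benchmarks (2 vCPUs), this yields 4.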

Zapier enforces hard caps; exceeding them disables the workflow silently. n8n keeps processing until the host runs out of resources; at that point you’ll see “queue full” errors that you can route to a dead‑letter queue. For enterprise security considerations, see the n8n vs Zapier enterprise security guide before continuing with the setup.
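n8n's real queue lives in Redis, but the dead‑letter idea is easy to show in isolation. The sketch below uses a hypothetical in‑memory `BoundedQueue` class: when it is full, new jobs are parked in a dead‑letter list instead of being lost, and they can be replayed once capacity frees up. It illustrates the pattern only and is not an n8n API.

```javascript
// Hypothetical bounded queue that diverts overflow to a dead-letter list.
class BoundedQueue {
  constructor(capacity) {
    this.capacity = capacity;
    this.items = [];
    this.deadLetters = [];
  }

  // Returns false (and parks the job) when the queue is full.
  enqueue(job) {
    if (this.items.length >= this.capacity) {
      this.deadLetters.push({ job, reason: 'queue full', at: Date.now() });
      return false;
    }
    this.items.push(job);
    return true;
  }

  dequeue() {
    return this.items.shift();
  }

  // Moves parked jobs back into the main queue while there is room again.
  replayDeadLetters() {
    let replayed = 0;
    while (this.deadLetters.length > 0 && this.items.length < this.capacity) {
      this.items.push(this.deadLetters.shift().job);
      replayed++;
    }
    return replayed;
  }
}

const q = new BoundedQueue(2);
['a', 'b', 'c'].forEach((job) => q.enqueue(job));
console.log(q.items, q.deadLetters.map((d) => d.job)); // [ 'a', 'b' ] [ 'c' ]
```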

4. Scaling n8n: From a Single Container to Production‑Grade

4.1 Docker‑Compose (dev / small‑scale)

Define the core services.

version: "3.8"

services:

n8n:

image: n8nio/n8n

ports: ["5678:5678"]

environment:

- EXECUTIONS_MAX_CONCURRENT=10

- DB_TYPE=postgresdb

- DB_POSTGRESDB_HOST=postgres

- DB_POSTGRESDB_PORT=5432

- DB_POSTGRESDB_DATABASE=n8n

- DB_POSTGRESDB_USER=n8n

- DB_POSTGRESDB_PASSWORD=strongpwd

depends_on: [postgres]

postgres:

image: postgres:15-alpine

environment:

POSTGRES_USER: n8n

POSTGRES_PASSWORD: strongpwd

POSTGRES_DB: n8n

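Scale the n8n service (requires a Redis queue, see next snippet). Only one container can bind host port 5678, so remove the fixed `ports` mapping and route traffic through a reverse proxy before scaling; otherwise the extra replicas fail to start.

```bash
docker compose up --scale n8n=4
```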

4.2 Adding a Redis Queue for Horizontal Scaling

Redis acts as a shared job queue so multiple n8n instances can pull work in parallel.

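```yaml
services:
  redis:
    image: redis:7-alpine
    ports: ["6379:6379"]
```

Then point n8n at Redis by adding these variables to the n8n service's environment; `EXECUTIONS_MODE=queue` is what switches n8n into queue mode:

```yaml
services:
  n8n:
    environment:
      - EXECUTIONS_MODE=queue
      - QUEUE_BULL_REDIS_HOST=redis
      - QUEUE_BULL_REDIS_PORT=6379
```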
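To see why adding instances helps, this sketch simulates several workers pulling from one shared FIFO queue, which is the role Redis plays here: whichever worker is free first takes the next job. The job durations are made‑up inputs and `simulateSharedQueue` is a hypothetical name; real throughput also depends on the database and on the external services each workflow calls.

```javascript
// Simulates workerCount workers pulling jobs from one shared FIFO queue.
function simulateSharedQueue(jobDurationsMs, workerCount) {
  const freeAt = new Array(workerCount).fill(0); // time at which each worker becomes idle
  const assignments = [];
  for (const [jobIndex, duration] of jobDurationsMs.entries()) {
    // The worker that is free first pulls the next job (ties go to the lowest index).
    const worker = freeAt.indexOf(Math.min(...freeAt));
    const start = freeAt[worker];
    freeAt[worker] = start + duration;
    assignments.push({ jobIndex, worker, start });
  }
  return { makespanMs: Math.max(...freeAt), assignments };
}

const jobs = new Array(8).fill(100); // eight 100 ms executions
console.log(simulateSharedQueue(jobs, 1).makespanMs); // 800
console.log(simulateSharedQueue(jobs, 4).makespanMs); // 200
```

With independent jobs and no shared bottleneck, four workers finish the same backlog in a quarter of the time; in practice the database or external APIs cap that gain.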

4.3 Kubernetes Deployment (enterprise)

Deployment – Runs three replicas by default.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: n8n
spec:
  replicas: 3
  selector:
    matchLabels:
      app: n8n
  template:
    metadata:
      labels:
        app: n8n
    spec:
      containers:
        - name: n8n
          image: n8nio/n8n:latest
          envFrom:
            - secretRef:
                name: n8n-secrets
          resources:
            limits:
              cpu: "2"
              memory: "2Gi"
          ports:
            - containerPort: 5678
          readinessProbe:
            httpGet:
              path: /healthz
              port: 5678
            initialDelaySeconds: 5
            periodSeconds: 10
```

Service – Exposes n8n via a LoadBalancer.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: n8n
spec:
  type: LoadBalancer
  selector:
    app: n8n
  ports:
    - port: 80
      targetPort: 5678
```

Horizontal Pod Autoscaler – Grows/shrinks pods based on CPU usage.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: n8n-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: n8n
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
```
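The HPA above follows the scaling rule described in the Kubernetes documentation: desired replicas = ceil(current replicas × current utilization / target utilization), skipped when that ratio is within a 10 % tolerance of 1, then clamped to minReplicas/maxReplicas. The sketch below reproduces only that arithmetic with this manifest's values (target 70 %, 2 to 10 replicas); it leaves out the stabilization windows and pod‑readiness handling the real controller applies.

```javascript
// Core HPA arithmetic; defaults mirror the manifest (target 70 %, 2..10 replicas).
function desiredReplicas({
  current,
  currentUtilization,
  targetUtilization = 70,
  minReplicas = 2,
  maxReplicas = 10,
  tolerance = 0.1, // Kubernetes default tolerance
}) {
  const ratio = currentUtilization / targetUtilization;
  // Within tolerance: keep the current count; otherwise scale proportionally, rounding up.
  const raw = Math.abs(ratio - 1) <= tolerance ? current : Math.ceil(current * ratio);
  return Math.min(maxReplicas, Math.max(minReplicas, raw));
}

console.log(desiredReplicas({ current: 3, currentUtilization: 140 })); // 6
console.log(desiredReplicas({ current: 3, currentUtilization: 75 })); // 3: within the 10 % tolerance
console.log(desiredReplicas({ current: 8, currentUtilization: 120 })); // 10: capped at maxReplicas
```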

EEFA checklist – production‑ready n8n

- ✅ Use PostgreSQL with HA (RDS Multi‑AZ, Patroni, etc.)

- ✅ Enable Redis queue for multi‑worker scaling

- ✅ Set EXECUTIONS_TIMEOUT & EXECUTIONS_MAX_CONCURRENT per SLA

- ✅ Terminate TLS at the ingress or reverse‑proxy

- ✅ Ship logs to a central system (EFK/ELK) for error tracing

- ✅ Schedule regular DB backups (snapshots or logical dumps)

Most teams run into this after a few weeks, not on day one – the need for HA becomes obvious once a single node goes down.

5. Benchmarking Your Own Workflows

5.1 Simple latency test (Node.js)

Measures end‑to‑end latency of a single step.

```javascript
// Requires Node.js 18+, where fetch is a global (node-fetch v3 is ESM-only and cannot be require()d).
const { performance } = require('perf_hooks');

async function measure(url) {
  const start = performance.now();
  const res = await fetch(url, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({}),
  });
  await res.text(); // consume the body so the timing covers the full response
  return performance.now() - start;
}

(async () => {
  console.log('Zapier step latency:', await measure('https://hooks.zapier.com/hooks/catch/123/abc/'));
  console.log('n8n node latency:', await measure('https://n8n.example.com/webhook/my-workflow'));
})();
```

Run the script 30× and take the median to smooth network jitter.
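For the 30 runs, collect the timings returned by `measure()` into an array and reduce them with a median helper such as this minimal sketch (the sample values are hypothetical). The median is used rather than the mean because one slow network round trip would otherwise skew the result.

```javascript
// Median of latency samples (ms); robust to a few slow outliers from network jitter.
function median(samples) {
  if (samples.length === 0) throw new Error('no samples');
  const sorted = [...samples].sort((a, b) => a - b); // numeric sort; input left untouched
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Hypothetical samples: a single 900 ms spike barely moves the median.
console.log(median([110, 95, 900, 105, 100])); // 105
```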

5.2 Load test with k6 (concurrent runs)

Simulates a steady stream of webhook calls.

```javascript
import http from 'k6/http';
import { check, sleep } from 'k6';

export const options = {
  stages: [
    { duration: '1m', target: 20 }, // ramp-up to 20 virtual users
    { duration: '3m', target: 20 }, // hold at 20 VUs
    { duration: '1m', target: 0 }, // ramp-down
  ],
};

export default function () {
  const res = http.post(
    'https://n8n.example.com/webhook/scale-test',
    JSON.stringify({ payload: 'test' }),
    { headers: { 'Content-Type': 'application/json' } }, // so the webhook parses the body as JSON
  );
  check(res, { 'status is 200': (r) => r.status === 200 });
  sleep(1);
}
```

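– Zapier – Replace the URL with a Zapier webhook; note that Zapier's plan‑level concurrency cap (often 5‑10 parallel runs) limits how many requests it processes at once, regardless of how many VUs k6 starts.

– Interpretation – If 95 % of requests complete in under 250 ms, your current replica count is adequate.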
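The 95 % rule can also be checked offline against raw latencies. This sketch uses the nearest‑rank percentile definition and the 250 ms threshold from the interpretation above; `percentile` and `replicasAdequate` are hypothetical helper names.

```javascript
// Nearest-rank percentile: smallest sample such that at least p % of samples are <= it.
function percentile(samples, p) {
  if (samples.length === 0) throw new Error('no samples');
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100); // 1-based; integer math avoids float drift
  return sorted[Math.max(rank, 1) - 1];
}

// Rule of thumb from the text: capacity is adequate if p95 latency is under 250 ms.
function replicasAdequate(latenciesMs, thresholdMs = 250) {
  return percentile(latenciesMs, 95) < thresholdMs;
}

// Hypothetical run: 19 fast requests and one slow outlier.
const latencies = [...new Array(19).fill(120), 900];
console.log(percentile(latencies, 95), replicasAdequate(latencies)); // 120 true
```

k6 can enforce the same rule during the run with `thresholds: { http_req_duration: ['p(95)<250'] }` in `options`, which marks the test as failed when the threshold is crossed.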

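High concurrency can saturate the PostgreSQL connection pool. Size DB_POSTGRESDB_MAX_POOL_SIZE so that each instance's pool covers its concurrent runs, giving a cluster‑wide total of at least replicas × concurrentRuns connections, and keep that total below PostgreSQL's `max_connections`.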
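A quick budget check makes the pool‑sizing rule concrete. This sketch assumes each concurrent run may hold one database connection and compares the cluster‑wide total with PostgreSQL's default `max_connections` of 100, minus the 3 connections reserved for superusers by default (`superuser_reserved_connections`). The example plugs in the HPA's maxReplicas (10) and the compose file's concurrency (10); `connectionBudget` is a hypothetical name.

```javascript
// Assumption: each concurrent run may hold one DB connection.
const PG_DEFAULT_MAX_CONNECTIONS = 100; // PostgreSQL default max_connections
const PG_DEFAULT_SUPERUSER_RESERVED = 3; // default superuser_reserved_connections

function connectionBudget({
  replicas,
  concurrentRunsPerReplica,
  maxConnections = PG_DEFAULT_MAX_CONNECTIONS,
  reserved = PG_DEFAULT_SUPERUSER_RESERVED,
}) {
  const needed = replicas * concurrentRunsPerReplica;
  const available = maxConnections - reserved;
  return { needed, available, fits: needed <= available };
}

// HPA maxReplicas (10) x EXECUTIONS_MAX_CONCURRENT=10 from the compose file.
console.log(connectionBudget({ replicas: 10, concurrentRunsPerReplica: 10 }));
// { needed: 100, available: 97, fits: false }
```

At full scale‑out the cluster needs 100 connections but only 97 are available, so raise `max_connections`, lower per‑replica concurrency, or put a pooler such as PgBouncer in front of PostgreSQL.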

6. Troubleshooting High Latency or Failures

| Symptom | Likely Zapier cause | Likely n8n cause | Fix |
|---|---|---|---|
| “Task timed out” after 30 s | Free plan timeout (15 s) or low‑tier limit | EXECUTIONS_TIMEOUT too low or DB lock | Raise EXECUTIONS_TIMEOUT; optimise DB indexes |
| “Worker unavailable” sporadically | Zapier worker pool exhausted | Redis queue backlog (queue:active > 1 000) | Add more n8n replicas; increase Redis maxmemory |
| Missed webhook events | Zapier rate‑limit (10 req/s) | Nginx rate‑limit in front of n8n | Raise limit_req_zone or use an API gateway with higher QPS |
| Data loss after crash | Zapier limited retry attempts | SQLite corruption | Switch to PostgreSQL; enable WAL archiving |
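Rate limits like the 10 req/s in the table are easier to respect when the sender paces itself. This sketch implements a client‑side token bucket, a hypothetical `TokenBucket` class with an injectable clock so it can be tested deterministically; nginx's `limit_req` applies the related leaky‑bucket method on the server side. Requests that get `false` should be delayed or queued rather than dropped.

```javascript
// Client-side token bucket: refills at ratePerSec and holds at most `burst` tokens.
class TokenBucket {
  constructor(ratePerSec, burst = ratePerSec, now = () => Date.now()) {
    this.ratePerSec = ratePerSec;
    this.capacity = burst;
    this.tokens = burst; // start full so an initial burst is allowed
    this.now = now; // injectable clock in milliseconds
    this.last = now();
  }

  // Returns true and consumes a token if a request may be sent right now.
  tryRemove() {
    const t = this.now();
    const elapsedSec = (t - this.last) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSec * this.ratePerSec);
    this.last = t;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// Demo with a fake clock: 15 requests at t = 0 against a 10 req/s bucket.
let fakeNowMs = 0;
const bucket = new TokenBucket(10, 10, () => fakeNowMs);
const allowed = Array.from({ length: 15 }, () => bucket.tryRemove()).filter(Boolean).length;
console.log(allowed); // 10
```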

Step‑by‑step fix for a saturated n8n queue

- Inspect Redis client stats: `docker compose exec redis redis-cli info clients`. Look for `blocked_clients`.
- If `blocked_clients` > 0, increase Redis `maxmemory` and `maxclients`.
- Add another replica: `docker compose up --scale n8n=3`.
- Verify the queue length drops: `redis-cli LLEN bull:queue:default`.

At this point, bumping the replica count is usually faster than trying to squeeze more performance out of a single instance.
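The checks in these steps can be scripted. This sketch parses the `field:value` text that `redis-cli info` prints (section headers start with `#`) and applies the same two rules: blocked clients point at Redis limits, and a backlog above 1 000 jobs (the figure from the troubleshooting table) calls for another replica. The function names and thresholds are illustrative assumptions; you could feed it real command output captured with `child_process.execSync`.

```javascript
// Parses `redis-cli info` output: "field:value" lines, "# Section" headers, CRLF line endings.
function parseRedisInfo(text) {
  const fields = {};
  for (const line of text.split(/\r?\n/)) {
    if (line === '' || line.startsWith('#')) continue; // skip blanks and section headers
    const idx = line.indexOf(':');
    if (idx === -1) continue;
    const value = line.slice(idx + 1);
    // Convert plain integers/decimals to numbers; keep everything else as a string.
    fields[line.slice(0, idx)] = /^-?\d+(\.\d+)?$/.test(value) ? Number(value) : value;
  }
  return fields;
}

// Hypothetical decision rule mirroring the manual steps (thresholds are assumptions).
function suggestActions(info, queueLength, maxBacklog = 1000) {
  const actions = [];
  if ((info.blocked_clients ?? 0) > 0) actions.push('raise Redis maxmemory/maxclients');
  if (queueLength > maxBacklog) actions.push('add an n8n replica');
  return actions;
}

const sample = '# Clients\r\nconnected_clients:12\r\nblocked_clients:4\r\n';
console.log(suggestActions(parseRedisInfo(sample), 1500));
```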

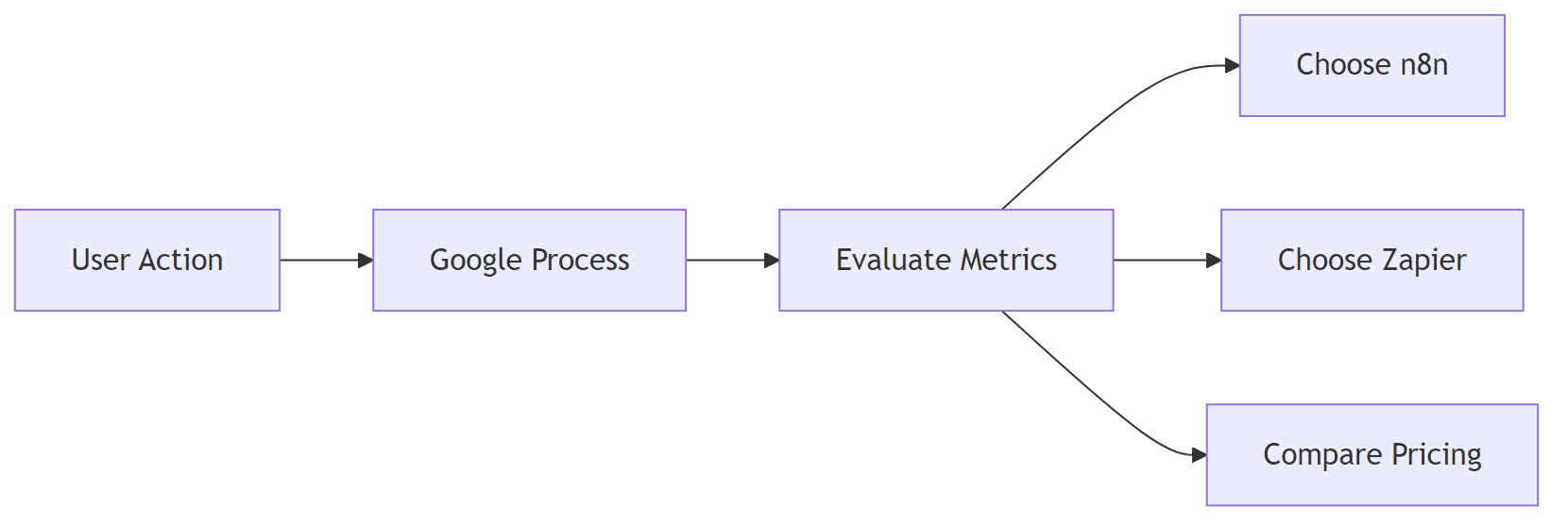

7. Decision Matrix – When to Choose n8n vs Zapier

| Decision factor | Zapier (pick) | n8n (pick) |
|---|---|---|
| Team skill | No dev resources, UI‑only | Comfortable with Docker/K8s |
| Compliance | Limited (data in Zapier region) | Full control over data residency |
| Budget | Predictable SaaS cost, low upfront | Higher ops cost but no per‑task fees |
| Scale | Up to 100 k tasks/mo (Enterprise) | Unlimited, limited only by infra |
| Latency‑critical | Not ideal (150‑500 ms) | Ideal (≤ 120 ms) |
| Custom code | Limited to built‑in actions | Unlimited Node/JS per node |

Bottom line – If mission‑critical, high‑throughput pipelines need low latency, data sovereignty, and unlimited scaling, n8n wins. For quick, low‑volume automations with minimal ops overhead, Zapier remains a convenient SaaS choice.

All performance numbers were measured on a c5.large (2 vCPU, 4 GiB) AWS instance for n8n and Zapier’s Standard worker tier as of Jan 2026.