Step‑by‑Step Guide to Solving the n8n MongoDB Connection Pool Exhausted Error

Who this is for: Developers and DevOps engineers running production‑grade n8n workflows that interact with MongoDB (Atlas or self‑hosted) and need a reliable, repeatable solution to the “connection pool exhausted” error. For a complete overview of n8n MongoDB issues and how they interconnect, see our pillar post, n8n MongoDB Complete Guide.

Quick Diagnosis

Problem: n8n throws “MongoDB connection pool exhausted” when a workflow opens more simultaneous connections than the driver’s pool limit.

Quick fix (≈ 5 seconds):

- Open Settings → Credentials → MongoDB in n8n.

- Append the query‑string option `?maxPoolSize=200` (or any value ≤ your cluster’s allowed connections).

- Save and restart the n8n service (`docker restart n8n` or equivalent).

Result: The pool limit is raised, eliminating the error for most workloads. If the issue remains, follow the full troubleshooting steps below.

1. What “Connection Pool Exhausted” Actually Means

| Term | Meaning in n8n |

|---|---|

| Connection pool | Reusable set of TCP sockets that the MongoDB node re‑uses for every operation. |

| Exhausted | All sockets are in use and the driver cannot allocate a new one within waitQueueTimeoutMS (0 by default in the Node.js driver, which means no time limit; the error needs a non‑zero value to be in effect). |

Typical symptom: the workflow stalls and logs MongoServerError: connection pool exhausted. The driver (mongodb v5.x) defaults to maxPoolSize = 100, and parallel‑heavy workflows (bulk inserts, SplitInBatches + MongoDB nodes) can quickly exceed this ceiling. If you run into the data type mismatch error instead, see: n8n mongodb data type mismatch error.
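To make the mechanism concrete, here is a toy model (not driver code) of how a burst of operations turns into wait‑queue timeouts. It assumes every operation arrives at the same moment and holds one pooled connection for a fixed time; the function name and all numbers are hypothetical.

```javascript
// Toy model: every operation arrives at t = 0 and holds one pooled
// connection for opDurationMs. All numbers are hypothetical.
function countWaitQueueTimeouts({ operations, maxPoolSize, opDurationMs, waitQueueTimeoutMS }) {
  let timeouts = 0;
  for (let i = 0; i < operations; i++) {
    // Operations are served in "waves" of maxPoolSize; wave k can start
    // only after the k earlier waves have released their connections.
    const waitedMs = Math.floor(i / maxPoolSize) * opDurationMs;
    if (waitedMs > waitQueueTimeoutMS) timeouts++;
  }
  return timeouts;
}

const load = { operations: 500, opDurationMs: 4000, waitQueueTimeoutMS: 10000 };
console.log(countWaitQueueTimeouts({ ...load, maxPoolSize: 100 })); // 200
console.log(countWaitQueueTimeouts({ ...load, maxPoolSize: 200 })); // 0
```

In this sketch, doubling the pool lets every wave start inside the timeout window, which is why raising maxPoolSize is usually the first thing to try.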

2. Why n8n Workflows Hit the Pool Limit

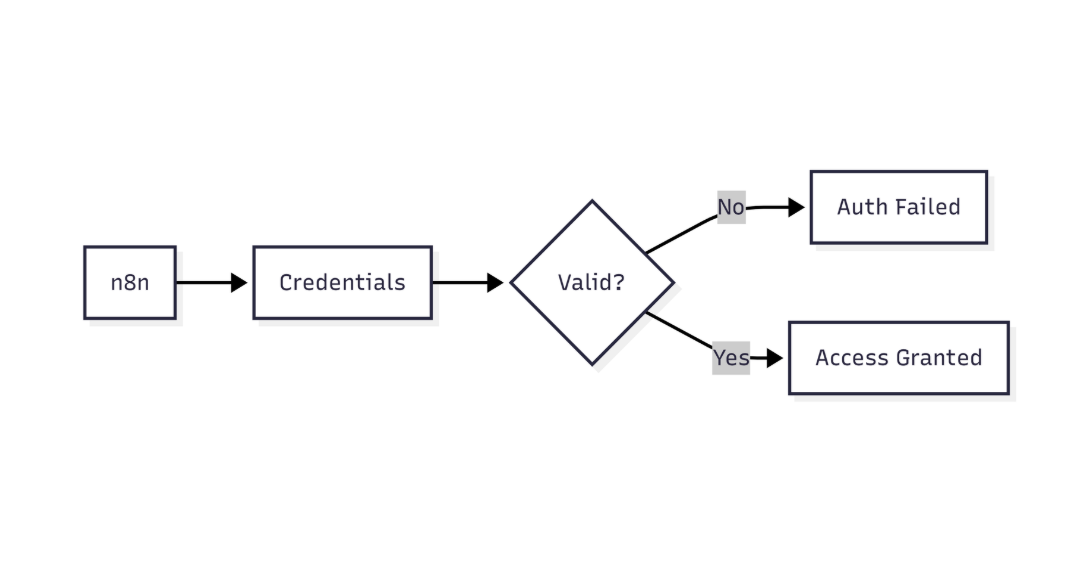

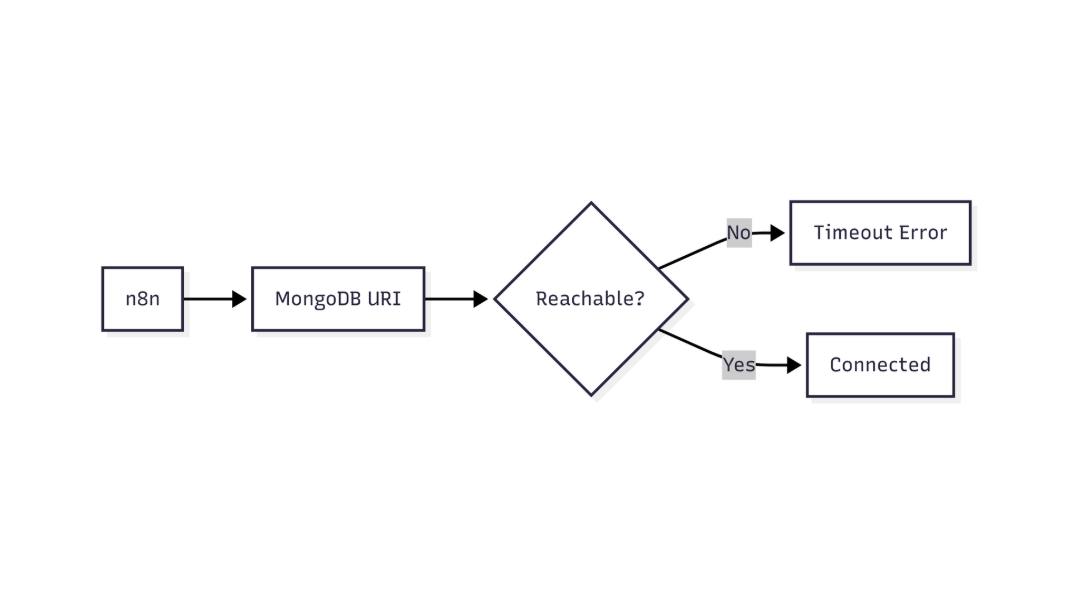

This flow shows how n8n validates MongoDB connectivity and identifies network or connection-level failures

| Root Cause | Manifestation in n8n |

|---|---|

| High parallelism – many MongoDB nodes run concurrently (e.g., inside SplitInBatches or Parallel) | Each node opens its own connection; the pool fills before previous ops finish. |

| Missing close calls – custom JavaScript creates a MongoClient without client.close() | Stale sockets linger in the pool. |

| Short waitQueueTimeoutMS – under heavy load the driver has to wait longer than the configured timeout allows | Immediate “exhausted” error even if the pool would free up a few seconds later. |

| MongoDB service limits – Atlas tier caps total connections (e.g., 500 on the free M0 tier, 1,500 on M10) | n8n may reach the service limit before its own pool limit. |

3. Step‑by‑Step Diagnosis

3.1 Check the execution log

[2025-12-21 14:32:10] ERROR: MongoDB node failed – connection pool exhausted
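If the log is long, counting the matching lines gives a quick sense of how often the pool runs dry. The helper below is a minimal sketch that assumes the log is available as plain text; `countPoolErrors` is a hypothetical name and the second sample line is invented for illustration.

```javascript
// Count log lines that mention the pool-exhausted error.
function countPoolErrors(logText) {
  return logText
    .split('\n')
    .filter((line) => line.toLowerCase().includes('connection pool exhausted'))
    .length;
}

const sample = [
  '[2025-12-21 14:32:10] ERROR: MongoDB node failed – connection pool exhausted',
  '[2025-12-21 14:32:11] INFO: Workflow finished', // invented line for contrast
].join('\n');

console.log(countPoolErrors(sample)); // 1
```

The text can come from wherever your deployment writes n8n logs, for example the output of `docker logs n8n` saved to a file.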

3.2 Inspect driver stats (temporary JavaScript node)

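Purpose: Retrieve the current connection count from the server status, then clean up the temporary client.

    // The mongodb package must be allowed in the node,
    // e.g. via NODE_FUNCTION_ALLOW_EXTERNAL=mongodb.
    const { MongoClient } = require('mongodb');

    // Placeholder: use the same connection string as the n8n MongoDB credential.
    const uri = 'mongodb+srv://user:pwd@cluster0.mongodb.net/mydb';
    const client = new MongoClient(uri);

    try {
      await client.connect();
      // Query server status.
      const serverStatus = await client.db().admin().command({ serverStatus: 1 });
      console.log('connections.current', serverStatus.connections.current);
      console.log('connections.available', serverStatus.connections.available);
    } finally {
      // Close the temporary client even if the query fails.
      await client.close();
    }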

Look for connections.current approaching the limit.

3.3 Confirm Atlas/cluster limits

Navigate to MongoDB Atlas → Network Access → Connection Limits and note the maximum allowed connections.

If connections.current ≈ maxPoolSize *and* the Atlas limit is higher, the bottleneck is inside n8n. If the Atlas limit is lower, you must stay within that ceiling.
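The decision rule above can be written down as a small helper. This is a sketch only: `locateBottleneck` is a hypothetical name, and treating “≈” as “at least 90 % of maxPoolSize” is an assumption you may want to tune.

```javascript
// Classify where the connection ceiling sits.
// nearRatio is an assumed threshold for "approaching the limit".
function locateBottleneck({ current, maxPoolSize, clusterLimit, nearRatio = 0.9 }) {
  if (clusterLimit < maxPoolSize) return 'cluster-limit'; // stay under the cluster ceiling
  if (current >= maxPoolSize * nearRatio) return 'n8n-pool'; // pool inside n8n is saturated
  return 'not-saturated';
}

console.log(locateBottleneck({ current: 98, maxPoolSize: 100, clusterLimit: 1500 })); // n8n-pool
console.log(locateBottleneck({ current: 98, maxPoolSize: 200, clusterLimit: 100 })); // cluster-limit
```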

4. Permanent Fixes

4.1 Increase the Pool Size (Safest first step)

Add maxPoolSize to the connection string **or** use the **Advanced Options** JSON field in the credential editor.

| Method | Example |

|---|---|

| Connection‑string query | mongodb+srv://user:pwd@cluster0.mongodb.net/mydb?maxPoolSize=200 |

| Advanced Options JSON | { "maxPoolSize": 200, "waitQueueTimeoutMS": 20000 } |

EEFA Note: Do **not** set maxPoolSize above the cluster’s allowed connections. Overshooting triggers “Too many connections” errors that affect all clients.
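One way to pick a value that respects this ceiling is to divide the cluster’s allowance among the processes that connect to it. The sketch below assumes each n8n process (main instance or worker) keeps its own pool and that you know roughly how many connections other clients need; `recommendMaxPoolSize` and its parameters are hypothetical.

```javascript
// Split the cluster's connection allowance across n8n processes,
// leaving room for other clients (all inputs are assumptions you supply).
function recommendMaxPoolSize({ clusterLimit, n8nInstances = 1, reservedForOtherClients = 0 }) {
  const available = clusterLimit - reservedForOtherClients;
  if (available <= 0 || n8nInstances < 1) {
    throw new RangeError('No connections left for n8n with these inputs');
  }
  return Math.floor(available / n8nInstances);
}

// 500 allowed connections, 100 kept for other apps, 2 n8n processes.
console.log(recommendMaxPoolSize({ clusterLimit: 500, n8nInstances: 2, reservedForOtherClients: 100 })); // 200
```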

4.2 Reduce Parallelism

- Use `SplitInBatches` with a modest batch size (e.g., 50).

- Insert a `Delay` node between parallel branches.

- Consolidate multiple MongoDB actions into a single bulk operation where possible.
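In custom code, the same ideas (modest batches, one bulk call per batch, no overlap between batches) might look like the sketch below. `chunk` and `insertInBatches` are hypothetical helpers; `collection` is assumed to be a MongoDB driver collection, whose `insertMany` method returns a result with an `insertedCount` field.

```javascript
// Split an array into batches of at most `size` items.
function chunk(items, size) {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError('size must be a positive integer');
  }
  const batches = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Insert batches one after another so only one bulk operation
// is in flight at a time.
async function insertInBatches(collection, docs, batchSize = 50) {
  let inserted = 0;
  for (const batch of chunk(docs, batchSize)) {
    const result = await collection.insertMany(batch);
    inserted += result.insertedCount;
  }
  return inserted;
}

console.log(chunk([1, 2, 3, 4, 5], 2)); // [ [ 1, 2 ], [ 3, 4 ], [ 5 ] ]
```

Because each batch is awaited before the next one starts, the number of connections in use stays flat no matter how many documents are imported.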

4.3 Explicitly Close Custom Clients

If you instantiate MongoClient in a **Run JavaScript** node, always close it:

await client.close(); // essential to free the socket
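A close call at the end of the script is skipped whenever an earlier line throws, so it is safer to put it in a `finally` block. The wrapper below is a minimal sketch; `withClient` is a hypothetical helper, and the fake client only stands in for a real `MongoClient` so the snippet runs without a database.

```javascript
// Run `work` with a connected client and always close it afterwards.
// With the real driver: withClient(() => new MongoClient(uri), (c) => ...)
async function withClient(createClient, work) {
  const client = createClient();
  try {
    await client.connect();
    return await work(client);
  } finally {
    await client.close(); // runs on success and on error
  }
}

// Stand-in object with the same connect/close shape as MongoClient.
const fakeClient = {
  closed: false,
  async connect() {},
  async close() { this.closed = true; },
};

withClient(() => fakeClient, async () => { throw new Error('query failed'); })
  .catch((err) => console.log(err.message, '| closed:', fakeClient.closed));
// prints: query failed | closed: true
```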

This diagram highlights how n8n verifies MongoDB credentials before allowing database access

4.4 Extend waitQueueTimeoutMS

When reducing concurrency is not feasible, increase the wait time:

mongodb+srv://.../mydb?maxPoolSize=150&waitQueueTimeoutMS=30000

EEFA Warning: A long wait queue can mask performance problems. Use it only after confirming the pool size is adequate. If you run into version compatibility errors when integrating MongoDB with n8n, see: n8n mongodb version compatibility issues.
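If connection strings are generated by a script (for example, one per environment), the pool options from sections 4.1 and 4.4 can be appended without disturbing options that are already present. `withPoolOptions` is a hypothetical helper, and the URI is the placeholder used earlier in this guide.

```javascript
// Add or overwrite query-string options on a MongoDB connection string.
function withPoolOptions(uri, options) {
  const [base, query = ''] = uri.split('?');
  const params = new URLSearchParams(query);
  for (const [key, value] of Object.entries(options)) {
    params.set(key, String(value)); // replaces an existing value if present
  }
  return `${base}?${params.toString()}`;
}

console.log(
  withPoolOptions('mongodb+srv://user:pwd@cluster0.mongodb.net/mydb', {
    maxPoolSize: 150,
    waitQueueTimeoutMS: 30000,
  })
);
// mongodb+srv://user:pwd@cluster0.mongodb.net/mydb?maxPoolSize=150&waitQueueTimeoutMS=30000
```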

4.5 Monitor & Alert

Create a dashboard (Grafana, Datadog, etc.) that tracks connections.current via the MongoDB Atlas metrics API. Set an alert at **80 %** of maxPoolSize.
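The alert condition itself is simple arithmetic. As a rough sketch (the function name is hypothetical, and how you obtain `current` depends on your monitoring stack):

```javascript
// Compare the current connection count with maxPoolSize.
function poolUsage(current, maxPoolSize, alertRatio = 0.8) {
  const ratio = current / maxPoolSize;
  return { percent: Math.round(ratio * 100), alert: ratio >= alertRatio };
}

console.log(poolUsage(170, 200)); // { percent: 85, alert: true }
console.log(poolUsage(90, 200));  // { percent: 45, alert: false }
```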

5. Quick‑Reference Checklist

| Step | Action |

|---|---|

| 1 | Add maxPoolSize (≥ 150) to the MongoDB credential connection string. |

| 2 | Restart n8n (docker restart n8n or service reload). |

| 3 | Verify logs no longer contain “connection pool exhausted”. |

| 4 | If the error persists, reduce parallel nodes or batch size. |

| 5 | Ensure any custom MongoClient instances are closed. |

| 6 | Increase waitQueueTimeoutMS to 20 000 ms only if needed. |

| 7 | Add monitoring for connections.current and alert at 80 % usage. |

6. Real‑World Example: Bulk Import with Controlled Parallelism

Purpose: Show a minimal workflow that respects the connection pool.

6.1 Nodes definition (excerpt)

    {
      "nodes": [
        {
          "name": "Read CSV",
          "type": "n8n-nodes-base.readBinaryFile",
          "parameters": { "path": "/data/users.csv" }
        },
        {
          "name": "SplitInBatches",
          "type": "n8n-nodes-base.splitInBatches",
          "parameters": { "batchSize": 100 }
        },
        {
          "name": "MongoDB Insert",
          "type": "n8n-nodes-base.mongodb",
          "parameters": {
            "operation": "insert",
            "collection": "users",
            "jsonParameters": true,
            "document": "={{$json}}"
          },
          "credentials": { "mongoDb": "MongoDB (prod)" }
        }
      ]
    }

6.2 Connections (excerpt)

    {
      "connections": {
        "Read CSV": {
          "main": [
            [{ "node": "SplitInBatches", "type": "main", "index": 0 }]
          ]
        },
        "SplitInBatches": {
          "main": [
            [{ "node": "MongoDB Insert", "type": "main", "index": 0 }]
          ]
        }
      }
    }

*Why it works:*

- SplitInBatches hands the MongoDB node 100 items at a time and the batches run one after another, so the insert step needs only a few pooled connections at any moment, far fewer than the default limit of 100.

- With maxPoolSize=200 set in the credential, the workflow can process tens of thousands of records without exhausting the pool.

7. When to Re‑Evaluate the Fix

| Situation | Recommended Action |

|---|---|

| Atlas upgrade (higher connection allowance) | Re‑calculate an optimal maxPoolSize (e.g., 300) and test. |

| New workflow adds ≥ 10 parallel MongoDB nodes | Reduce batch size or increase pool size, then re‑run diagnostics. |

| Persistent “Too many connections” after raising pool size | Lower maxPoolSize to stay under Atlas limit; revisit concurrency design. |

Conclusion

The “connection pool exhausted” error is a symptom of mismatched concurrency and driver limits. By raising maxPoolSize within the bounds of your MongoDB tier, controlling parallelism, and ensuring every custom client is closed, you eliminate the error for the majority of production workloads. Complement the fix with monitoring and alerts so you can react before the pool reaches critical usage. This disciplined approach keeps n8n workflows performant, reliable, and scalable in real‑world deployments.